Recently I moved to a service provider, and for the past few months I have been looking at a multi-site, next-generation Private Cloud design and how it will scale over the next 5 to 10 years. This is a high-level view of the functional components and how they fit together.

Business Requirements

The following business requirements apply and are self-explanatory:

- Fastest Time to Market

- Reduce Operational Costs

- Increase Revenue

- Improve Business Agility

- Deliver SLAs of 99.9% to 99.999% (Non-critical, Business Critical and Mission Critical services)

These translate into the following technical requirements:

- 99% Virtualisation

- Maintain the same IT Division Headcount over time

- Proactive Operations (not Reactive)

- Deliver Active-Active Multi-Site Availability of 99.9% to 99.999%

- No Single Point of Failure

- Scalable Performance

- Service Catalogue Portal for all IT Services with pre-configured policies for compute, network, storage and security

- IP version 6 only

- Enhanced security with micro-segmentation of the infrastructure

- Most efficient use of budget
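To make the SLA range concrete, the sketch below converts an availability target into a yearly downtime budget and estimates the availability of two active-active sites. It is a minimal Python sketch, not a formal availability model: it assumes a 365-day year, that site failures are independent, and that either site can carry the full load on its own. Shared dependencies, such as the inter-site storage link, will make real figures lower.

```python
# Minimal sketch: SLA targets expressed as downtime budgets, and the
# availability of active-active sites under simplifying assumptions.

MINUTES_PER_YEAR = 365 * 24 * 60  # assumes a 365-day year


def downtime_minutes_per_year(availability: float) -> float:
    """Maximum yearly downtime allowed by an availability target (0..1)."""
    return (1.0 - availability) * MINUTES_PER_YEAR


def parallel_availability(site_availability: float, sites: int = 2) -> float:
    """Availability of N active-active sites.

    Assumes site failures are independent and that any single surviving
    site can carry the full load; shared dependencies break this.
    """
    return 1.0 - (1.0 - site_availability) ** sites


if __name__ == "__main__":
    for target in (0.999, 0.9999, 0.99999):
        minutes = downtime_minutes_per_year(target)
        print(f"{target:.3%} SLA -> {minutes:.1f} minutes of downtime per year")
    print(f"Two independent 99.9% sites -> {parallel_availability(0.999):.4%}")
```

Under these assumptions 99.9% allows about 525.6 minutes (8.76 hours) of downtime a year, while 99.999% allows only about 5.3 minutes. This is why the upper tiers depend on the active-active, no-single-point-of-failure design rather than on any single site.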

Private Cloud and EUC for the Very Large Enterprise

The diagram below shows the summarised logical design. End User Computing (EUC) is included to show how it integrates with the IT Service Management layer.

The design has five major layers, each made up of logical building blocks with the following functions:

1. IT Service Management Layer

- Unified IT Service Management – presentation layer for end-to-end IT service management, including workflows and API calls to the other four layers. This level of abstraction reduces the complexity of the Cloud Management Layer.

2. Cloud Management Layer

Each of these management systems has south-bound APIs to manage the physical and virtual infrastructure and north-bound APIs for connecting to the IT Service Management layer.

- Cloud Management Platform – drives the software-defined data center; each application blueprint has fixed policies for compute, network, storage and security.

- Advanced Operations Management – Predictive analytics, alerts and reporting of applications and infrastructure.

- Virtual Infrastructure Management – Centralised management and orchestration platform for virtual infrastructure.

- Application Provisioning – an orchestrator integrated with the guest OS that provides OS and application control and is used to automatically build, operate and maintain the server infrastructure.
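A service catalogue entry whose blueprint carries fixed policies could be modelled roughly as follows. This is a hypothetical Python sketch: the class names, fields and the 99.99% target for the Business Critical tier are assumptions for illustration, not the API of any cloud management product. Platform placement follows the physical layer in this design, with non-critical workloads on hyper-converged and business and mission critical workloads on converged infrastructure.

```python
from dataclasses import asdict, dataclass
from enum import Enum


class Tier(Enum):
    """Service tiers mapped to SLA targets (the 99.99% middle value is assumed)."""
    NON_CRITICAL = 0.999
    BUSINESS_CRITICAL = 0.9999
    MISSION_CRITICAL = 0.99999


@dataclass(frozen=True)
class Policies:
    """Fixed compute, network, storage and security policy for a blueprint."""
    vcpus: int
    ram_gb: int
    network_segment: str   # micro-segment the workload is attached to
    storage_class: str
    security_profile: str  # e.g. the firewall rule set applied to the segment


@dataclass(frozen=True)
class Blueprint:
    name: str
    tier: Tier
    policies: Policies

    @property
    def platform(self) -> str:
        # Non-critical -> hyper-converged; business/mission critical -> converged.
        return "hyper-converged" if self.tier is Tier.NON_CRITICAL else "converged"


def build_request(blueprint: Blueprint, instances: int) -> dict:
    """Turn a catalogue selection into a provisioning request.

    The requester chooses only the blueprint and instance count; every
    policy comes from the blueprint and cannot be overridden.
    """
    if instances < 1:
        raise ValueError("instances must be at least 1")
    return {
        "blueprint": blueprint.name,
        "instances": instances,
        "sla": blueprint.tier.value,
        "platform": blueprint.platform,
        "policies": asdict(blueprint.policies),
    }


if __name__ == "__main__":
    web = Blueprint(
        name="web-frontend",
        tier=Tier.NON_CRITICAL,
        policies=Policies(2, 8, "web-dmz", "standard", "web-default"),
    )
    print(build_request(web, instances=3))
```

Keeping the policies inside a frozen blueprint, rather than accepting them from the requester, is what lets the portal offer self-service without giving up control of compute, network, storage and security settings.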

3. Management Layer

As in the Cloud Management Layer, each of these management systems has south-bound APIs to manage the physical and virtual infrastructure and north-bound APIs for connecting to the IT Service Management layer.

- End User Computing Management

- Storage Management

- Security Management

- Network Management

- Converged / Hyper-Converged Infrastructure Management
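The north-bound/south-bound split can be sketched as a management system that exposes a simple north-bound call and translates it into calls on a pluggable south-bound driver. All names here (`SouthboundDriver`, `InMemoryStorageDriver`, `StorageManagement`) are hypothetical; a real deployment would call the vendor's own management APIs rather than an in-memory stand-in.

```python
from typing import Protocol


class SouthboundDriver(Protocol):
    """South-bound API used by a management system to configure infrastructure."""

    def apply(self, resource: str, settings: dict) -> str:
        ...


class InMemoryStorageDriver:
    """Hypothetical stand-in for a real array or software-defined storage controller."""

    def __init__(self) -> None:
        self.state: dict = {}

    def apply(self, resource: str, settings: dict) -> str:
        self.state[resource] = dict(settings)
        return f"storage:{resource}"


class StorageManagement:
    """A Management Layer system: north-bound methods in, south-bound calls out."""

    def __init__(self, driver: SouthboundDriver) -> None:
        self._driver = driver

    def provision_volume(self, name: str, size_gb: int, storage_class: str) -> str:
        """North-bound call, e.g. invoked by an IT Service Management workflow."""
        if size_gb <= 0:
            raise ValueError("size_gb must be positive")
        # Translate the service-level request into device-level settings.
        return self._driver.apply(name, {"size_gb": size_gb, "class": storage_class})


if __name__ == "__main__":
    driver = InMemoryStorageDriver()
    storage = StorageManagement(driver)
    print(storage.provision_volume("crm-db-01", 500, "gold"))
    print(driver.state)
```

Swapping the driver for one that talks to a different array leaves the north-bound call, and therefore the IT Service Management workflows built on it, unchanged.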

4. Virtualisation Layer

- Server Virtualisation – abstraction of CPU, RAM, network and storage resources with a hypervisor that can be driven by policy.

- Network Virtualisation – abstraction of L2 and L3 resources with a hypervisor that can be driven by policy.

- Storage Virtualisation – abstraction of data resources with a layer that can be driven by policy.

- Security Virtualisation – abstraction of L4 to L7 services with a hypervisor that can be driven by policy.

- Business Continuity / Active-Active Data Centers – metro storage clustering (MSC) that allows non-uniform access to compute, network and storage resources. Network latency and bandwidth are critical to MSC and require careful analysis and design.

- Backup / Recovery – application-consistent, database-consistent, file-system and VM image-level backups.

- End User Computing – Desktop virtualisation, Application virtualisation, Physical desktop management and Mobile Device Management.
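A first-pass check of the MSC latency and bandwidth constraints can be done with simple arithmetic. The Python sketch below assumes roughly 5 µs of one-way delay per km of fibre (light travels at about 200,000 km/s in glass), models switching and replication overhead as a single fixed figure, and uses a 5 ms RTT limit and 30% bandwidth headroom purely as assumptions. Substitute the figures your storage and hypervisor vendors actually support, and measure the real fibre route, which is usually longer than the straight-line distance.

```python
FIBRE_US_PER_KM = 5.0  # approx. one-way delay; light covers ~200,000 km/s in fibre


def rtt_ms(route_km: float, equipment_ms: float = 0.0) -> float:
    """Round-trip delay over a fibre route, plus an assumed fixed equipment delay."""
    return 2 * route_km * FIBRE_US_PER_KM / 1000.0 + equipment_ms


def max_route_km(max_rtt_ms: float, equipment_ms: float = 0.0) -> float:
    """Longest fibre route that stays within an RTT limit."""
    return (max_rtt_ms - equipment_ms) * 1000.0 / (2 * FIBRE_US_PER_KM)


def required_link_mbps(peak_write_mb_per_s: float, headroom: float = 1.3) -> float:
    """Inter-site bandwidth needed to mirror peak writes synchronously.

    The 30% headroom default is an assumption, not a vendor figure.
    """
    return peak_write_mb_per_s * 8 * headroom


if __name__ == "__main__":
    limit_ms = 5.0  # assumed RTT limit; use your vendor's supported figure
    for km in (50, 100, 300):
        print(f"{km} km route -> {rtt_ms(km, equipment_ms=0.2):.2f} ms RTT")
    print(f"Max route for {limit_ms} ms: {max_route_km(limit_ms, 0.2):.0f} km")
    print(f"200 MB/s peak writes -> {required_link_mbps(200):.0f} Mbit/s")
```

Propagation alone adds about 1 ms of round-trip time per 100 km of fibre, so site selection and fibre routing effectively set the upper bound on what the metro cluster can tolerate.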

5. Physical Layer

- Converged Infrastructure – pre-configured and pre-tested pods of compute, network and storage. Provides medium compute density and is the platform for business critical and mission critical workloads. It is important to partner with a vendor that supports scale-out of deployed pods.

- Hyper-Converged Infrastructure – integrated compute and storage hardware with a storage virtualisation layer. Delivers the highest compute and storage density and is the platform for non-critical and EUC workloads.

- Physical Network – Routing, IETF TRILL, IEEE SPB, non-blocking ECMP, Clos-type Leaf and Spine switched network.

- Physical L4-L7 – Services such as Network Filtering, Application Filtering, Intrusion Prevention Systems, IPSec and SSL VPNs, Advanced Load Balancing etc.
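Whether a leaf-spine (Clos) fabric is actually non-blocking comes down to the ratio of server-facing to spine-facing capacity on each leaf. The Python sketch below computes that ratio and the server port capacity of a two-tier fabric. The port counts are hypothetical, and the capacity calculation assumes every leaf has exactly one uplink to every spine, so the spine port count caps the number of leaves and the uplinks per leaf set the number of spines (the ECMP width).

```python
def oversubscription(down_ports: int, down_gbps: float,
                     up_ports: int, up_gbps: float) -> float:
    """Leaf oversubscription ratio: server-facing capacity / spine-facing capacity.

    A value of 1.0 or less means the leaf is non-blocking.
    """
    return (down_ports * down_gbps) / (up_ports * up_gbps)


def fabric_server_ports(spine_ports: int, leaf_down_ports: int) -> int:
    """Server ports in a two-tier leaf-spine fabric.

    Assumes one uplink from every leaf to every spine, so each spine port
    serves one leaf and the spine port count caps the number of leaves.
    """
    max_leaves = spine_ports
    return max_leaves * leaf_down_ports


if __name__ == "__main__":
    # Hypothetical leaf: 48 x 25G down, 6 x 100G up (2:1, not non-blocking)
    print(oversubscription(48, 25, 6, 100))
    # Hypothetical leaf: 32 x 25G down, 8 x 100G up (1:1, non-blocking)
    print(oversubscription(32, 25, 8, 100))
    # Hypothetical 64-port spines with 32 server ports per leaf
    print(fabric_server_ports(64, 32))
```

Trading some server ports per leaf for more uplinks is the usual way to reach 1:1. The spine port count then becomes the limit on how far the fabric can scale before a third tier is needed.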

Compute, Network and Storage Density

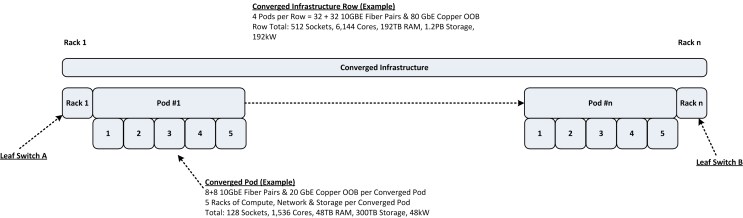

It is important to calculate the expected compute, network and storage resources per data center, which will be driven and limited by the power and cooling capacity of the facility. Assign each row a function: hyper-converged, converged infrastructure or common services. Then add up the row totals to find the projected data center total in terms of CPU sockets, CPU cores, RAM, network ports, storage and power.

Start with a standard hyper-converged rack populated with hyper-converged servers, then multiply it by the number of racks in the row, less the racks dedicated to leaf switches. Hyper-converged infrastructure is the densest hardware configuration, roughly four times as dense as converged infrastructure.

Do the same with converged infrastructure pods.
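The rack-to-row-to-data-center roll-up described above can be captured in a small Python sketch. The per-server figures in the example are hypothetical, power for the leaf switch racks is not counted, and the 20 kW per-rack limit is taken from the facility design figure used in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackSpec:
    """Contents of one standard compute rack (all figures are inputs you supply)."""
    servers: int
    sockets_per_server: int
    cores_per_socket: int
    ram_gb_per_server: int
    storage_tb_per_server: float
    ports_per_server: int
    kw_per_server: float

    def totals(self) -> dict:
        sockets = self.servers * self.sockets_per_server
        return {
            "sockets": sockets,
            "cores": sockets * self.cores_per_socket,
            "ram_gb": self.servers * self.ram_gb_per_server,
            "storage_tb": self.servers * self.storage_tb_per_server,
            "network_ports": self.servers * self.ports_per_server,
            "kw": self.servers * self.kw_per_server,
        }


@dataclass(frozen=True)
class Row:
    function: str    # "hyper-converged", "converged" or "common services"
    racks: int
    leaf_racks: int  # racks given over to leaf switches (not counted here)
    rack: RackSpec

    def totals(self) -> dict:
        compute_racks = self.racks - self.leaf_racks
        return {k: v * compute_racks for k, v in self.rack.totals().items()}


def rack_power_ok(rack: RackSpec, limit_kw: float = 20.0) -> bool:
    """Check a rack against the facility's per-rack design power."""
    return rack.totals()["kw"] <= limit_kw


def data_center_totals(rows: list) -> dict:
    """Sum the row totals into a projected data center total."""
    total: dict = {}
    for row in rows:
        for key, value in row.totals().items():
            total[key] = total.get(key, 0) + value
    return total


if __name__ == "__main__":
    # Hypothetical hyper-converged rack: 16 dual-socket nodes at 1 kW each
    hci = RackSpec(16, 2, 16, 768, 20.0, 2, 1.0)
    row = Row("hyper-converged", racks=10, leaf_racks=1, rack=hci)
    print(rack_power_ok(hci), row.totals())
    print(data_center_totals([row, row]))
```

Converged infrastructure rows can be modelled the same way with a different `RackSpec` per pod, and comparing the two outputs shows how the density gap plays out against the facility's power limits.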

Public Cloud for the Service Provider

The design described here is primarily a private cloud for a very large enterprise, but it could be adapted to a public cloud as well. However, one of the biggest business requirements for a public cloud is to minimise cost so the service can run on the thinnest possible margin. That opens the door to open source solutions such as OpenStack, OpenCompute and OpenDaylight. These save on licensing and infrastructure costs, but they increase the operational cost of developing, operating and maintaining the solution, since you are on your own.

The Data Center Facility

I have covered this in a previous post, “Data Center Facility Strategy”, so I will not belabor the point. Suffice it to say that, to support high-density computing (OpenCompute, converged and hyper-converged infrastructure), the modern data center will have some or all of the following characteristics:

- Designed for 20+kW per rack

- N+1 UPS, or Diesel Rotary UPS (DRUPS), where the generator and UPS functions are integrated into one unit

- Achieving the lowest possible PUE requires DC rather than AC power distribution (covered in OpenCompute)

- Hot/Cold Aisle Containment with In-Row Cooling

- Overhead power and structured cabling

- Minimum or no raised floor (depends upon routing of chilled water pipes)
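The power figures above translate directly into facility sizing. PUE is total facility power divided by IT equipment power, so the IT load per rack multiplied by PUE gives the facility draw. The Python sketch below uses that relationship to size the facility and to work out how many racks a power budget supports, plus a simple N+1 UPS module count. The rack count, PUE, budget and module size in the example are hypothetical, and the UPS sizing covers only the IT load, which is an assumption.

```python
import math


def pue(total_facility_kw: float, it_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    return total_facility_kw / it_kw


def facility_kw(racks: int, kw_per_rack: float, pue_value: float) -> float:
    """Total facility draw for a given IT load and PUE."""
    return racks * kw_per_rack * pue_value


def max_racks(facility_budget_kw: float, kw_per_rack: float, pue_value: float) -> int:
    """How many fully loaded racks a facility power budget can support."""
    return math.floor(facility_budget_kw / (kw_per_rack * pue_value))


def n_plus_one_modules(it_kw: float, module_kw: float) -> int:
    """UPS modules for N+1: enough to carry the IT load, plus one spare."""
    return math.ceil(it_kw / module_kw) + 1


if __name__ == "__main__":
    # Hypothetical figures: 200 racks at the 20 kW design point, PUE 1.3
    print(facility_kw(200, 20.0, 1.3))          # total facility kW
    print(max_racks(6000, 20.0, 1.3))           # racks a 6 MW budget supports
    print(n_plus_one_modules(200 * 20.0, 500))  # 500 kW UPS modules needed
```

Every point of PUE saved at 20 kW per rack frees power for more racks within the same facility budget, which is why the facility characteristics above matter as much as the compute density.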

Other Resources